Journal Club & Progress Club

In our Journal Club, lab members take turns presenting papers that they find interesting and that, ideally, others will also find interesting and useful. In the Progress Club, lab members present the state of their research. In the new format, one presenter per week gives both presentations. The target length for the Journal Club presentation is 12-15 minutes, since that is a typical conference talk slot. The length of the progress report naturally depends on how much progress has been made since the last one, but 5-10 minutes is a good guideline. As a reminder, here are the points from my announcement email regarding the new format:

- We will have one presenter per week

- The presenter will give both a Journal Club presentation and a progress report.

- The target times for the presentations remain 12-15 minutes for JC and 5-10 minutes for PC. PC times may vary more because the amount of progress varies considerably.

- As a rule of thumb, do not prepare more than one slide per minute; fewer is of course fine.

- Whenever possible, we will hold discussions after the corresponding talk, not during it. Comprehension questions can be asked during the talk.

- We will still have student presentation days.

- We will not continue the old cycle but will start a new one from scratch. As a result, some of us may have longer and some shorter breaks between presentations.

Schedule

| Date | Presenter |
|---|---|
| 07.02. | |
| 31.01. | |
| 24.01. | |
| 17.01. | |
| 10.01. | |
| 20.12. | Susanne |
| 13.12. | Nique |
| 06.12. | Student |
| 29.11. | Felix |

Gulletta, Gianpaolo, Eliana Costa e Silva, Wolfram Erlhagen, Ruud Meulenbroek, Maria Fernanda Pires Costa, and Estela Bicho. “A Human-like Upper-Limb Motion Planner: Generating Naturalistic Movements for Humanoid Robots.” International Journal of Advanced Robotic Systems, (March 2021). https://doi.org/10.1177/1729881421998585.

As robots are starting to become part of our daily lives, they must be able to cooperate in a natural and efficient manner with humans to be socially accepted. Human-like morphology and motion are often considered key features for intuitive human–robot interactions because they allow human peers to easily predict the final intention of a robotic movement. Here, we present a novel motion planning algorithm, the Human-like Upper-limb Motion Planner, for the upper limb of anthropomorphic robots, that generates collision-free trajectories with human-like characteristics. Mainly inspired from established theories of human motor control, the planning process takes into account a task-dependent hierarchy of spatial and postural constraints modelled as cost functions. For experimental validation, we generate arm-hand trajectories in a series of tasks including simple point-to-point reaching movements and sequential object-manipulation paradigms. Being a major contribution to the current literature, specific focus is on the kinematics of naturalistic arm movements during the avoidance of obstacles. To evaluate human-likeness, we observe kinematic regularities and adopt smoothness measures that are applied in human motor control studies to distinguish between well-coordinated and impaired movements. The results of this study show that the proposed algorithm is capable of planning arm-hand movements with human-like kinematic features at a computational cost that allows fluent and efficient human–robot interactions.

Presented on 02.06.2020 by Susanne Blex

Paper:

https://journals.sagepub.com/doi/full/10.1177/1729881421998585

Gulletta, Gianpaolo, et al. “Human-Like Arm Motion Generation: A Review.” Robotics, vol. 9, no. 4, Dec. 2020, p. 102, doi:10.3390/robotics9040102.

In the last decade, the objectives outlined by the needs of personal robotics have led to the rise of new biologically-inspired techniques for arm motion planning. This paper presents a literature review of the most recent research on the generation of human-like arm movements in humanoid and manipulation robotic systems. Search methods and inclusion criteria are described. The studies are analyzed taking into consideration the sources of publication, the experimental settings, the type of movements, the technical approach, and the human motor principles that have been used to inspire and assess human-likeness. Results show that there is a strong focus on the generation of single-arm reaching movements and biomimetic-based methods. However, little attention has been paid to manipulation, obstacle-avoidance mechanisms, and dual-arm motion generation. For these reasons, human-like arm motion generation may not fully respect key human behavioral and neurological features and may remain restricted to specific tasks of human-robot interaction. Limitations and challenges are discussed to provide meaningful directions for future investigations.

Presented on 31.03.2020 by Susanne Blex


In my current project I encountered a stumbling block: inefficient/insufficient exploration. While it did not really solve my problem, it prompted me to finally read this paper, which I had wanted to read for a while. Here is the abstract:

Yuri Burda*, Harrison Edwards*, Oleg Klimov (all OpenAI), Amos Storkey (Univ. of Edinburgh) *main authors

We introduce an exploration bonus for deep reinforcement learning methods that is easy to implement and adds minimal overhead to the computation performed. The bonus is the error of a neural network predicting features of the observations given by a fixed randomly initialized neural network. We also introduce a method to flexibly combine intrinsic and extrinsic rewards. We find that the random network distillation (RND) bonus combined with this increased flexibility enables significant progress on several hard exploration Atari games. In particular we establish state of the art performance on Montezuma’s Revenge, a game famously difficult for deep reinforcement learning methods. To the best of our knowledge, this is the first method that achieves better than average human performance on this game without using demonstrations or having access to the underlying state of the game, and occasionally completes the first level.
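The core idea of the RND bonus can be illustrated in a few lines of NumPy. This is a minimal sketch, not the authors' implementation: it assumes a fixed random one-layer target network and a linear predictor trained by plain gradient descent (the paper uses deep networks, observation normalization and PPO), and the names `RNDBonus`, `feature_dim` and `lr` are hypothetical.

```python
import numpy as np


class RNDBonus:
    """Toy random network distillation: the bonus is the predictor's error on fixed random features."""

    def __init__(self, obs_dim: int, feature_dim: int = 16, lr: float = 0.1, seed: int = 0):
        rng = np.random.default_rng(seed)
        # Fixed, randomly initialized target network (a single tanh layer); never trained.
        self.w_target = rng.normal(size=(obs_dim, feature_dim))
        # Trainable linear predictor (assumption: a simplification of the paper's deep predictor).
        self.w_pred = np.zeros((obs_dim, feature_dim))
        self.lr = lr

    def target(self, obs: np.ndarray) -> np.ndarray:
        return np.tanh(obs @ self.w_target)

    def bonus(self, obs: np.ndarray) -> float:
        """Intrinsic reward: mean squared error between predicted and target features."""
        err = obs @ self.w_pred - self.target(obs)
        return float(np.mean(err ** 2))

    def update(self, obs: np.ndarray) -> None:
        """One gradient-descent step on the prediction error for a single observation."""
        err = obs @ self.w_pred - self.target(obs)
        # Gradient of mean(err**2) with respect to w_pred.
        grad = 2.0 * np.outer(obs, err) / err.size
        self.w_pred -= self.lr * grad
```

In use, the bonus would be added (suitably scaled) to the extrinsic reward. Repeatedly calling `update` on a frequently visited observation drives its bonus toward zero, while a rarely seen observation keeps a high bonus, which is what pushes the agent toward novel states.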


Validating appropriateness and naturalness of human-robot interaction (HRI) is commonly performed by taking subjective measures from human interaction partners, e.g. questionnaire ratings. Although these measures can be of high value for robot designers, they are very sensitive and can be inaccurate and/or biased. In this paper we propose and validate a neuro-based method for objectively validating robot behavior in HRI. We propose to detect the perception of inappropriate/unexpected/erroneous robot behavior from the electroencephalogram (EEG) of a human interaction partner. To validate this method, we conducted an EEG experiment with a simplified HRI protocol in which a humanoid robot displayed context-dependent erroneous behavior from time to time. The EEG data taken from 13 participants revealed biologically plausible error-related potentials (ErrP) whose spatio-temporal distributions match well with related neuroscientific research. We further demonstrate that perceived erroneous robot action can reliably be modeled and detected from human EEG signals with an average classification accuracy of 69.7±9.1%. These findings confirm the principal feasibility of the proposed method and suggest that EEG-based ErrP detection can be used for quantitative evaluation and thus improvement of robot behavior.

Presented on 13.01.2020 by Aline Xavier Fidencio


Brain–machine interfaces (BMIs) are promising devices that can be used as neuroprostheses by severely disabled individuals. Brain surface electroencephalograms (electrocorticograms, ECoGs) can provide input signals that can then be decoded to enable communication with others and to control intelligent prostheses and home electronics. However, conventional systems use wired ECoG recordings. Therefore, the development of wireless systems for clinical ECoG BMIs is a major goal in the field. We developed a fully implantable ECoG signal recording device for human ECoG BMI, i.e., a wireless human ECoG-based real-time BMI system (W-HERBS). In this system, three-dimensional (3D) high-density subdural multiple electrodes are fitted to the brain surface and ECoG measurement units record 128-channel (ch) ECoG signals at a sampling rate of 1 kHz. The units transfer data to the data and power management unit implanted subcutaneously in the abdomen through a subcutaneous stretchable spiral cable. The data and power management unit then communicates with a workstation outside the body and wirelessly receives 400 mW of power from an external wireless transmitter. The workstation records and analyzes the received data in the frequency domain and controls external devices based on the analyses. We investigated the performance of the proposed system. We were able to use W-HERBS to detect sine waves with a 4.8-µV amplitude and a 60–200-Hz bandwidth from the ECoG BMIs. W-HERBS is the first fully implantable ECoG-based BMI system with more than 100 ch. It is capable of recording 128-ch subdural ECoG signals with sufficiently low input-referred noise (3 µVrms) and with an acceptable time delay (250 ms). The system contributes to the clinical application of high-performance BMIs and to experimental brain research.

Presented on 09.12.2020 by Sebastian Doliwa

Paper:

https://www.frontiersin.org/articles/10.3389/fnins.2018.00511/full

1. Human muscle fatigue has been studied using a wide variety of exercise models, protocols and assessment methods. Based on the definition of fatigue as ‘any reduction in the maximal capacity to generate force or power output’, the different methods to measure fatigue are discussed. It is argued that reliable and valid measures must include either assessment of maximal voluntary contraction force or power, or the force generated by electrical stimulation. By comparing tetanic stimulation and maximal voluntary contraction force one may reveal whether fatigue is of central origin, or whether peripheral mechanisms are involved. Adequate use of twitch interpolation provides an even more sensitive measure for central fatigue. Indirect methods such as endurance times and electromyography show variable responses during exercise and no close relationship to fatigue. Hence these methods are of limited value in measurement of human muscle fatigue.

2. Muscle fatigue is a common experience in daily life. Many authors have defined it as the incapacity to maintain the required or expected force, and therefore, force, power and torque recordings have been used as direct measurements of muscle fatigue. In addition, the measurement of these variables combined with surface electromyography (sEMG) recordings (which can be measured during all types of movements) during exercise may be useful to assess and understand muscle fatigue. Therefore, there is a need to develop muscle fatigue models that relate changes in sEMG variables to muscle fatigue. However, the main issue when using conventional sEMG variables to quantify fatigue is their poor association with direct measures of fatigue. Therefore, using different techniques, several authors have combined sets of sEMG parameters to assess muscle fatigue. The aim of this paper is to serve as a state-of-the-art summary of different sEMG models used to assess muscle fatigue. This paper provides an overview of linear and non-linear sEMG models for estimating muscle fatigue, their ability to assess power loss and their limitations due to neuromuscular changes after a training period.
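Two of the conventional sEMG variables commonly used as fatigue indicators are the mean and median frequency of the power spectrum, which tend to shift toward lower frequencies during sustained contractions (although, as the second abstract points out, their association with direct fatigue measures is poor). The sketch below computes both from a raw signal with a plain FFT periodogram; the function names and the absence of windowing, filtering and epoching are simplifying assumptions, not the method of either paper.

```python
import numpy as np


def emg_spectrum(signal, fs: float):
    """One-sided (unscaled) power spectrum of a signal sampled at fs Hz."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()                  # remove DC offset
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    return freqs, power


def median_frequency(signal, fs: float) -> float:
    """Frequency that splits the power spectrum into two halves of equal power."""
    freqs, power = emg_spectrum(signal, fs)
    cumulative = np.cumsum(power)
    # First bin at which the cumulative power reaches half of the total power.
    idx = int(np.searchsorted(cumulative, cumulative[-1] / 2.0))
    return float(freqs[idx])


def mean_frequency(signal, fs: float) -> float:
    """Power-weighted average frequency of the spectrum."""
    freqs, power = emg_spectrum(signal, fs)
    return float(np.sum(freqs * power) / np.sum(power))
```

In a fatigue protocol, one would typically compute these values on consecutive windows of the recording and look at their trend over time rather than at a single value.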

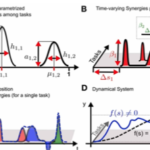

A salient feature of human motor skill learning is the ability to exploit similarities across related tasks. In biological motor control, it has been hypothesized that muscle synergies, coherent activations of groups of muscles, allow for exploiting shared knowledge. Recent studies have shown that a rich set of complex motor skills can be generated by a combination of a small number of muscle synergies. In robotics, dynamic movement primitives are commonly used for motor skill learning. This machine learning approach implements a stable attractor system that facilitates learning and it can be used in high-dimensional continuous spaces. However, it does not allow for reusing shared knowledge, i.e., for each task an individual set of parameters has to be learned. We propose a novel movement primitive representation that employs parametrized basis functions, which combines the benefits of muscle synergies and dynamic movement primitives. For each task a superposition of synergies modulates a stable attractor system. This approach leads to a compact representation of multiple motor skills and at the same time enables efficient learning in high-dimensional continuous systems. The movement representation supports discrete and rhythmic movements and in particular includes the dynamic movement primitive approach as a special case. We demonstrate the feasibility of the movement representation in three multi-task learning simulated scenarios. First, the characteristics of the proposed representation are illustrated in a point-mass task. Second, in complex humanoid walking experiments, multiple walking patterns with different step heights are learned robustly and efficiently. Finally, in a multi-directional reaching task simulated with a musculoskeletal model of the human arm, we show how the proposed movement primitives can be used to learn appropriate muscle excitation patterns and to generalize effectively to new reaching skills.

Presented on 30.09.2020 by Tim Sziburis

Paper:

https://www.frontiersin.org/articles/10.3389/fncom.2013.00138/full

Error-related potentials (ErrPs) are the neural signature of error processing. Therefore, the detection of ErrPs is an intuitive approach to improve the performance of brain-computer interfaces (BCIs). The incorporation of ErrPs in discrete BCIs is well established but the study of asynchronous detection of ErrPs is still in its early stages. Here we show the feasibility of asynchronously decoding ErrPs in an online scenario. For that, we measured EEG in 15 participants while they controlled a robotic arm towards a target using their right hand. In 30% of the trials, the control of the robotic arm was halted at an unexpected moment (error onset) in order to trigger error-related potentials. When an ErrP was detected after the error onset, participants regained control of the robot and could finish the trial. Regarding the asynchronous classification in the online scenario, we obtained an average true positive rate (TPR) of 70% and an average true negative rate (TNR) of 86.8%. These results indicate that the online asynchronous decoding of ErrPs was, on average, reliable, showing the feasibility of the asynchronous decoding of ErrPs in an online scenario.

Presented on 16.09.2020 by Aline Xavier Fidencio


Movement primitives are elementary motion units and can be combined sequentially or simultaneously to compose more complex movement sequences. A movement primitive time series consists of a sequence of motion phases. This progression through a set of motion phases can be modeled by Hidden Markov Models (HMMs). HMMs are stochastic processes that model time series data as the evolution of a hidden state variable through a discrete set of possible values, where each state value is associated with an observation (emission) probability. Each motion phase is represented by one of the hidden states and the sequential order by their transition probabilities. The observations of the MP-HMM are the sensor measurements of the human movement, for example, motion capture or inertial measurements. The emission probabilities are modeled as Gaussians. In this chapter, the MP-HMM modeling framework is described and applications to motion recognition and motion performance assessment are discussed. The selected applications include parametric MP-HMMs for explicitly modeling variability in movement performance and the comparison of MP-HMMs based on the log-likelihood, the Kullback–Leibler divergence, the extended HMM-based F-statistic, and gait-specific reference-based measures.
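To make the MP-HMM description more concrete, here is a minimal scaled forward algorithm that computes the log-likelihood of a sequence of scalar observations under an HMM with Gaussian emissions, one of the quantities used above to compare models. It is a sketch under simplifying assumptions (1-D observations, known parameters, no Baum-Welch training), and `forward_loglik` and its argument names are hypothetical.

```python
import numpy as np


def gaussian_pdf(x: float, mean: np.ndarray, std: np.ndarray) -> np.ndarray:
    """Emission density of observation x under each state's Gaussian."""
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2.0 * np.pi))


def forward_loglik(obs, start, trans, mean, std) -> float:
    """Log-likelihood log p(obs) via the scaled forward algorithm.

    start: (S,) initial state probabilities
    trans: (S, S) transition matrix, trans[i, j] = p(next state j | state i)
    mean, std: (S,) Gaussian emission parameters, one pair per motion phase
    """
    start, trans = np.asarray(start, float), np.asarray(trans, float)
    mean, std = np.asarray(mean, float), np.asarray(std, float)
    alpha = start * gaussian_pdf(obs[0], mean, std)
    scale = alpha.sum()                  # p(obs[0])
    loglik = np.log(scale)
    alpha /= scale                       # rescale to avoid numerical underflow
    for x in obs[1:]:
        # Propagate through the transitions, then weight by the emission density.
        alpha = (alpha @ trans) * gaussian_pdf(x, mean, std)
        scale = alpha.sum()              # p(obs[t] | obs[:t])
        loglik += np.log(scale)
        alpha /= scale
    return float(loglik)
```

With a left-to-right transition matrix (each phase can only stay or advance), a sequence that passes through the phases in the modeled order receives a much higher log-likelihood than the same samples in reverse order, which is the basis for using such scores in motion recognition and assessment.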

Presented on 15.07.2020 by Marie Schmidt

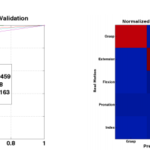

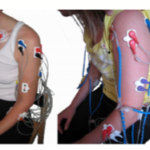

This work has been conducted in the context of pattern-recognition-based control strategies for electromyographic prostheses. It focuses on the conceptual design, implementation and validation of learning techniques based on the k-nearest neighbour (kNN) scheme for gesture recognition. After theoretical considerations and the identification of the topic within the contexts of prosthetic control, biomedical signals (specifically electromyography, EMG) and machine learning, particular state-of-research concepts are pointed out. With regard to nearest-neighbour classification, this also concerns methods for dataset reduction in order to cope with the high computational demands in the prediction phase of instance-based learning. Requirements for a kNN-based learning scheme suitable for surface-EMG-controlled prosthetics are specified, comprising high accuracy and success rates, as well as incrementality and proportionality for gestures that were not explicitly learned (intermediate levels of intensity). Furthermore, applicability of the proposed methods on embedded systems is required for an extended approach, considering real-time behaviour and determinism. On the one hand, these requirements are evaluated by theoretical examination. On the other hand, the proposed methods are implemented in practice. To test the implementation, datasets are captured by means of a state-of-the-art eight-channel EMG armband positioned on the forearm. Based on this data, the influence of kNN’s main parameter k on block-wise cross-validation accuracy is analyzed while also varying weighting factors and distance metrics. In addition, the effect of windowing concepts is investigated. Moreover, the effect of varying proportionality schemes is investigated with regard to both cross-validation accuracy and success rate in pilot experiments.
These are conducted as online target achievement tests, which also incorporate the evaluation of thresholding schemes for kNN classification. Additionally, the adequacy of different dataset reduction techniques for embedded control applications is assessed by applying kNN to the reduced prototype set and analyzing the cross-validation accuracy as well as the timing behaviour on captured EMG data. Among these methods, the Decision Surface Mapping algorithm (DSM) proves to be the most suitable. Furthermore, a randomized, double-blind user study is conducted in order to compare the implemented methods, namely kNN with a specific set of parameters and kNN after applying prototype generation via DSM, with the state-of-research algorithms Ridge Regression and Ridge Regression with Random Fourier Features. The results from these experiments show a statistically significant improvement in favour of the kNN-based algorithms in comparison to the ridge-regression-based techniques. Notably, kNN applied to the DSM-reduced set achieves higher success rates than the original technique in some cases. Although the difference between kNN and DSM-kNN is not statistically significant, it is remarkable considering that the reduced set contains only seven prototype samples in total, a reduction rate of over 99% while preserving accuracy. With k set to 1, which turned out to be an excellent choice, the running time complexity of both kNN (in every prediction step) and DSM-kNN (in the training phase) can be considered linear with respect to the number of original samples, which speaks in favour of embedded applicability.
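The plain kNN scheme at the core of this work is simple enough to sketch directly. Below is a minimal k-nearest-neighbour gesture classifier with Euclidean distance and majority voting. The feature representation (for example, one amplitude value per armband channel), the function name and the tie-breaking rule are assumptions for illustration; the thesis's weighting, proportionality, thresholding and DSM prototype reduction are not reproduced.

```python
from collections import Counter

import numpy as np


def knn_predict(train_x: np.ndarray, train_y: np.ndarray, query: np.ndarray, k: int = 1):
    """Predict the gesture label of `query` by majority vote among its k nearest samples."""
    # Euclidean distance from the query to every stored sample: one linear pass.
    dists = np.linalg.norm(train_x - query, axis=1)
    if k == 1:
        nearest = [int(np.argmin(dists))]   # linear scan, no sorting needed
    else:
        nearest = np.argsort(dists)[:k]
    # Neighbours are visited closest-first, so Counter.most_common breaks ties
    # in favour of the label seen first, i.e. the closer neighbour.
    votes = Counter(train_y[i] for i in nearest)
    return votes.most_common(1)[0][0]
```

With k = 1 the prediction reduces to a single distance computation per stored sample plus an argmin, which mirrors the linear per-prediction cost mentioned above; shrinking the stored set (as DSM does) shortens that scan directly.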

Presented on 17.06.2020 by Tim Sziburis

Electromyographic (EMG) processing is a vital step towards converting noisy muscle activation signals into robust features that can be decoded and used in applications such as prosthetics, exoskeletons, and human-machine interfaces. Current state-of-the-art processing methods involve collecting a dense set of features which are sensitive to much of the intra- and inter-subject variability ubiquitous in EMG signals. As a result, state-of-the-art decoding methods have been unable to achieve subject independence. This paper presents a novel multiresolution muscle synergy (MRMS) feature extraction technique which represents a set of EMG signals in a sparse domain robust to the inherent variability of EMG signals. The robust features, which can be extracted in real time, are used to train a neural network and demonstrate a highly accurate and user-independent classifier. Leave-one-out validation testing achieves mean accuracy of 81.9±3.9% and area under the receiver operating characteristic curve (AUC), a measure of overall classifier performance over all possible thresholds, of 92.4±8.9%. The results show the ability of sparse MRMS features to achieve subject independence in decoders, providing opportunities for large-scale studies and more robust EMG-driven applications.

Presented on 06.05.2020 by Felix Grün


Humans shape their hands to grasp, manipulate objects, and to communicate. From nonhuman primate studies, we know that visual and motor properties for grasps can be derived from cells in the posterior parietal cortex (PPC). Are non-grasp-related hand shapes in humans represented similarly? Here we show for the first time how single neurons in the PPC of humans are selective for particular imagined hand shapes independent of graspable objects. We find that motor imagery to shape the hand can be successfully decoded from the PPC by implementing a version of the popular Rock-Paper-Scissors game and its extension Rock-Paper-Scissors-Lizard-Spock. By simultaneous presentation of visual and auditory cues, we can discriminate motor imagery from visual information and show differences in auditory and visual information processing in the PPC. These results also demonstrate that neural signals from human PPC can be used to drive a dexterous cortical neuroprosthesis.

Presented on 22.04.2020 by Jonas Hilgenhöner

Paper:

https://www.jneurosci.org/content/jneuro/35/46/15466.full.pdf

In this paper we present a system for catching a flying ball with a robot arm using off-the-shelf components (PC based system) for visual tracking. The ball is observed by a large baseline stereo camera, comparing each image to a slowly adapting reference image. We track and predict the target position using an Extended Kalman Filter (EKF), also taking into account the air drag. The calibration is achieved by simply performing a few throws and observing their trajectories, as well as moving the robot to some predefined positions. The robustness of the system was demonstrated at the Hannover Fair 2000.
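The nonlinear motion model behind such a tracker can be sketched as gravity plus quadratic air drag, integrated with small explicit Euler steps. An EKF would use a model like this as its prediction step and linearize it around the current estimate; only the prediction is shown here. The drag law a = g - k_drag·|v|·v, the integrator and the function name are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

G = np.array([0.0, 0.0, -9.81])  # gravity in m/s^2, z axis pointing up (assumed frame)


def predict_ball(pos, vel, k_drag: float, dt: float, steps: int):
    """Propagate ball position and velocity under gravity and quadratic drag."""
    pos = np.asarray(pos, dtype=float).copy()
    vel = np.asarray(vel, dtype=float).copy()
    for _ in range(steps):
        # Drag acts against the velocity, proportional to speed squared.
        acc = G - k_drag * np.linalg.norm(vel) * vel
        pos += vel * dt
        vel += acc * dt
    return pos, vel
```

Setting `k_drag` to zero recovers (up to integration error) the drag-free parabola, which is a convenient sanity check; in the tracker, the drag coefficient would have to be identified, for instance from the calibration throws.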

Presented on 18.03.2020 by Sebastian Doliwa

Paper:

https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.1.6840&rep=rep1&type=pdf

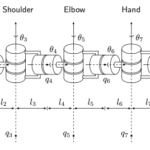

This report describes a mathematical framework based on screw theory to solve the kinematics and dynamics of an arbitrary open chain manipulator. The configuration of two specific manipulators is described in this framework as an example. For one of the manipulators, an anthropomorphic 7-DoF arm, a closed-form solution of the inverse kinematics problem is given.

The second part describes a software simulation framework to model arbitrary open chain manipulators in a physically realistic way. The presented SDK consists of a simulation of the kinematics and dynamics and an OpenGL visualization of the results.
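As a small illustration of the screw-theory formulation, the forward kinematics of an open chain can be written as a product of exponentials, T(θ) = exp([S1]θ1) ... exp([Sn]θn) M, where M is the end-effector pose at the home configuration. The sketch below handles revolute joints only and uses a planar two-link arm as an example; it is not the report's SDK, and the function names and example geometry are hypothetical.

```python
import numpy as np


def skew(w: np.ndarray) -> np.ndarray:
    """3x3 skew-symmetric matrix such that skew(w) @ v == np.cross(w, v)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])


def revolute_exp(axis: np.ndarray, point: np.ndarray, theta: float) -> np.ndarray:
    """4x4 transform for rotating by theta about a unit axis through `point`."""
    K = skew(axis)
    # Rodrigues' formula for the rotational part.
    R = np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)
    T = np.eye(4)
    T[:3, :3] = R
    # For a pure rotation twist (v = -axis x point) the translation reduces to (I - R) @ point.
    T[:3, 3] = (np.eye(3) - R) @ point
    return T


def forward_kinematics(axes, points, home: np.ndarray, thetas) -> np.ndarray:
    """Space-frame product of exponentials: T = exp(S1 th1) ... exp(Sn thn) @ home."""
    T = np.eye(4)
    for axis, point, theta in zip(axes, points, thetas):
        T = T @ revolute_exp(np.asarray(axis, float), np.asarray(point, float), theta)
    return T @ home
```

For a planar arm with unit-length links, both joint axes along z, joint 2 located at (1, 0, 0) and the end effector at (2, 0, 0) in the home pose, rotating only the second joint by 90° moves the end effector to (1, 1, 0). A 7-DoF arm only needs a longer list of axes and points.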

Presented on 11.03.2020 by Ioannis Iossifidis


Objective. Brain–machine interface (BMI) technologies have succeeded in controlling robotic exoskeletons, enabling some paralyzed people to control their own arms and hands. We have developed an exoskeleton asynchronously controlled by EEG signals. In this study, to enable real-time control of the exoskeleton for paresis, we developed a hybrid system with EEG and EMG signals, and the EMG signals were used to estimate its joint angles. Approach. Eleven able-bodied subjects and two patients with upper cervical spinal cord injuries (SCIs) performed hand and arm movements, and the angles of the metacarpophalangeal (MP) joint of the index finger, wrist, and elbow were estimated from EMG signals using a formula that we derived to calculate joint angles from EMG signals, based on a musculoskeletal model. The formula was exploited to control the elbow of the exoskeleton after automatic adjustments. Four able-bodied subjects and a patient with upper cervical SCI wore an exoskeleton controlled using EMG signals and were required to perform hand and arm movements to carry and release a ball. Main results. Estimated angles of the MP joints of index fingers, wrists, and elbows were correlated well with the measured angles in 11 able-bodied subjects (correlation coefficients were 0.81 ± 0.09, 0.85 ± 0.09, and 0.76 ± 0.13, respectively) and the patients (e.g. 0.91 ± 0.01 in the elbow of a patient). Four able-bodied subjects successfully positioned their arms to adequate angles by extending their elbows and a joint of the exoskeleton, with root-mean-square errors <6°. An upper cervical SCI patient, empowered by the exoskeleton, successfully carried a ball to a goal in all 10 trials. Significance. A BMI-based exoskeleton for paralyzed arms and hands using real-time control was realized by designing a new method to estimate joint angles based on EMG signals, and these may be useful for practical rehabilitation and the support of daily actions.

Presented on 04.03.2020 by Stephan Lehmler

Paper:

https://iopscience.iop.org/article/10.1088/1741-2552/aa525f/pdf

In this paper we argue for the fundamental importance of the value distribution: the distribution of the random return received by a reinforcement learning agent. This is in contrast to the common approach to reinforcement learning which models the expectation of this return, or value. Although there is an established body of literature studying the value distribution, thus far it has always been used for a specific purpose such as implementing risk-aware behaviour. We begin with theoretical results in both the policy evaluation and control settings, exposing a significant distributional instability in the latter. We then use the distributional perspective to design a new algorithm which applies Bellman’s equation to the learning of approximate value distributions. We evaluate our algorithm using the suite of games from the Arcade Learning Environment. We obtain both state-of-the-art results and anecdotal evidence demonstrating the importance of the value distribution in approximate reinforcement learning. Finally, we combine theoretical and empirical evidence to highlight the ways in which the value distribution impacts learning in the approximate setting.
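The algorithm referred to above (called C51 in the paper) represents each return distribution as probabilities over a fixed set of evenly spaced atoms and projects the distributional Bellman target r + γZ back onto that support. Below is a minimal NumPy sketch of this projection for a single transition; the function name and the support bounds in the example are illustrative assumptions, and the network and training loop are omitted.

```python
import numpy as np


def categorical_projection(next_probs: np.ndarray, reward: float, gamma: float,
                           v_min: float, v_max: float, done: bool = False) -> np.ndarray:
    """Project the distribution of r + gamma * Z onto the fixed support [v_min, v_max]."""
    n_atoms = next_probs.size
    atoms = np.linspace(v_min, v_max, n_atoms)
    delta = (v_max - v_min) / (n_atoms - 1)
    # Shift and shrink every atom (no bootstrapping at terminal states), then clip.
    tz = np.clip(reward + (0.0 if done else gamma) * atoms, v_min, v_max)
    # Fractional index of each shifted atom; clipping guards against rounding past the ends.
    b = np.clip((tz - v_min) / delta, 0, n_atoms - 1)
    lower = np.floor(b).astype(int)
    upper = np.ceil(b).astype(int)
    target = np.zeros(n_atoms)
    for j in range(n_atoms):
        if lower[j] == upper[j]:
            # Shifted atom lands exactly on a support point.
            target[lower[j]] += next_probs[j]
        else:
            # Split the mass linearly between the two neighbouring atoms.
            target[lower[j]] += next_probs[j] * (upper[j] - b[j])
            target[upper[j]] += next_probs[j] * (b[j] - lower[j])
    return target
```

The linear split preserves the expected value whenever no clipping occurs, so the mean of the projected target equals the classic scalar Bellman target; the network is then trained to minimize the cross-entropy between this target and its predicted distribution.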

Presented on 26.02.2020 by Felix Grün

Paper:

http://proceedings.mlr.press/v70/bellemare17a/bellemare17a.pdf

Traditionally, a Brain-Computer Interface (BCI) system uses Electroencephalogram (EEG) signals for communication and control applications. In recent years, other biological signals have also been combined with EEG signals to produce hybrid BCI devices that overcome the lower accuracy rates of BCIs. This paper presents a new approach of combining EEG and Electromyogram (EMG) signals using the spectral power correlation (SPC) to create a hybrid BCI device for controlling a hand exoskeleton. The proposed method was tested on 10 healthy individuals to measure its performance in terms of accuracy. The EEG-EMG SPC based hybrid BCI was trained to classify the grasp attempt and resting states of the user. Upon successful detection of a grasp attempt, the hybrid BCI triggers the hand exoskeleton to perform a finger flexion-extension motion. The proposed EEG-EMG SPC method is also compared with the conventional EEG-only method, which uses common spatial pattern (CSP) based spatial filtering. The results have shown that the proposed EEG-EMG SPC method yielded an average accuracy of 90±4.86% while the conventional EEG-CSP method yielded 79.75±5.71%. This significantly (p<0.02) improved classification accuracy indicates that the EEG-EMG SPC based hybrid BCI is a better alternative than the conventional EEG-CSP based BCI for generating hand-exoskeleton-based neurofeedback.

Presented on 19.02.2020 by Marie Schmidt

In this paper, a novel self-organizing fuzzy neural network is proposed that is constructed by an input–output mapping and monitored by a hierarchy of validity degrees. We define new operators called validification and devalidification to propagate validity into the six layers of the proposed architecture. Self-organization in structure learning is accomplished through a new measure that depends on error, the number of rules, and validity degrees. Additionally, a parameter learning condition is derived by studying the stability of the proposed approach. To evaluate the proposed approach, an impedance controller is designed facing three challenges: different kinds of uncertainty, partial truth, and real-time realization. In the proposed controller, considering the challenge of partial truth, we assume that the manipulator’s inertia is known according to first-principle knowledge while other parts are uncertain. Simulation results show the effectiveness of the proposed approach in the presence of disturbance. The proposed approach emerges as a promising approach by involving self-organizing property and possibility (fuzzy) and validity aspects of information.

Presented on 12.02.2020 by Ayaz Hussain

Paper:

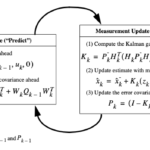

In statistics and control theory, Kalman filtering, also known as linear quadratic estimation (LQE), is an algorithm that uses a series of measurements observed over time, containing statistical noise and other inaccuracies, and produces estimates of unknown variables that tend to be more accurate than those based on a single measurement alone, by estimating a joint probability distribution over the variables for each timeframe. The filter is named after Rudolf E. Kálmán, one of the primary developers of its theory. The Kalman filter has numerous applications in technology. A common application is for guidance, navigation, and control of vehicles, particularly aircraft, spacecraft and dynamically positioned ships. Furthermore, the Kalman filter is a widely applied concept in time series analysis used in fields such as signal processing and econometrics. Kalman filters also are one of the main topics in the field of robotic motion planning and control, and they are sometimes included in trajectory optimization. The Kalman filter also works for modeling the central nervous system’s control of movement. Due to the time delay between issuing motor commands and receiving sensory feedback, use of the Kalman filter supports a realistic model for making estimates of the current state of the motor system and issuing updated commands.
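To illustrate the predict/update cycle described above, here is a minimal linear Kalman filter for a 1-D constant-velocity model that estimates position and velocity from noisy position measurements. The motion model, the noise parameters and the function name are assumptions chosen for the example.

```python
import numpy as np


def kalman_cv(measurements, dt: float, meas_var: float, accel_var: float) -> np.ndarray:
    """Track [position, velocity] from noisy position measurements (constant-velocity model)."""
    measurements = np.asarray(measurements, dtype=float)
    F = np.array([[1.0, dt], [0.0, 1.0]])             # state transition
    H = np.array([[1.0, 0.0]])                        # only position is observed
    # Process noise from a random (white) acceleration with variance accel_var.
    Q = accel_var * np.array([[dt**4 / 4, dt**3 / 2], [dt**3 / 2, dt**2]])
    R = np.array([[meas_var]])                        # measurement noise covariance
    x = np.array([measurements[0], 0.0])              # initial state guess
    P = np.eye(2) * 1e3                               # large initial uncertainty
    estimates = []
    for z in measurements:
        # Predict: propagate the state and its uncertainty through the model.
        x = F @ x
        P = F @ P @ F.T + Q
        # Update: blend prediction and measurement according to the Kalman gain.
        y = z - H @ x                                 # innovation
        S = H @ P @ H.T + R                           # innovation covariance
        K = P @ H.T @ np.linalg.inv(S)                # Kalman gain
        x = x + K @ y
        P = (np.eye(2) - K @ H) @ P
        estimates.append(x.copy())
    return np.array(estimates)
```

Although only positions are measured, the filter also recovers the velocity, and its position estimates are typically closer to the true trajectory than the raw measurements. This is the "more accurate than a single measurement" property described above.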

Presented on 22.01.2020 by Sebastian Doliwa

Paper:

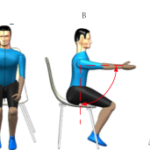

The continuous control of rehabilitation robots based on surface electromyography (sEMG) is a natural control strategy that can ensure human safety and ease the discomfort of human-machine coupling. However, current models for estimating movement of the upper limb focus on two-dimensional movement, and models of three-dimensional movement are too complex. In this paper, a simple-structure temporal-information-based model for upper limb motion was proposed. An experiment of the multijoint motion of the upper limb was carried out. We studied the touching motion and the compound task motion of the upper limbs. The touch motor task consists of three tasks, namely, shoulder abduction, shoulder forward bend and finger-nose. The compound tasks include driving and fetching objects. Three-dimensional upper limb movement data and sEMG signals of seven upper limb muscles were recorded from seven healthy subjects. Model training was carried out after data preprocessing and feature extraction. Then, 120 s of upper limb motion data was used to verify the performance of the model proposed in this paper. The estimated accuracy of the model for the touch tasks was 0.9171, and it was 0.8109 for compound tasks. Compared to the multilayer perceptron (MLP) model, a 13.57% reduction in the root-mean-square error (RMSE) was observed. The results show that the model has good accuracy for estimating the angular motion of the upper limb and that it has the potential to be applied for three-dimensional motion control in an upper limb mirror-image therapy rehabilitation robot.

Presented on 22.01.2020 by Stephan Lehmler

Paper:

https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=8918182

Objective: Stroke is a leading cause of long-term motor disability. Stroke patients with severe hand weakness do not profit from rehabilitative treatments. Recently, brain-controlled robotics and sequential functional electrical stimulation have allowed some improvement. However, for such therapies to succeed, patients’ intentions for different arm movements must be decoded. Here, we evaluated whether residual muscle activity could be used to predict movements of paralyzed joints in severely impaired chronic stroke patients. Methods: Muscle activity was recorded with surface electromyography (EMG) in 41 patients with severe hand weakness (Fugl-Meyer Assessment [FMA] hand subscores of 2.93 ± 2.7) in order to decode their intention to perform six different motions of the affected arm, required for voluntary muscle activity and to control neuroprostheses. Decoding of paretic and nonparetic muscle activity was performed using a feed-forward neural network classifier. The contribution of each muscle to the intended movement was determined. Results: Decoding of up to six arm movements was accurate (>65%) in more than 97% of nonparetic and 46% of paretic muscles. Interpretation: These results demonstrate that some level of neuronal innervation to the paretic muscle remains preserved and can be used to implement neurorehabilitative treatments in 46% of patients with severe paralysis and extensive cortical and/or subcortical lesions. Such decoding may, for the first time after stroke, allow these patients to control different motions of arm prostheses through muscle-triggered rehabilitative treatments.
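For readers less familiar with the decoder type mentioned above, here is a minimal dependency-free sketch of a feed-forward classifier (one tanh hidden layer, softmax output, cross-entropy trained by per-sample gradient descent). It is not the authors' network: the layer sizes, learning rate, number of muscle channels and the synthetic "one muscle per movement" features are all hypothetical, chosen only so the example runs and trains quickly.

```python
import math
import random


class FeedForwardClassifier:
    """One tanh hidden layer and a softmax output, trained with per-sample gradient descent."""

    def __init__(self, n_in, n_hidden, n_classes, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [[rng.gauss(0.0, 0.5) for _ in range(n_hidden)] for _ in range(n_classes)]
        self.b2 = [0.0] * n_classes

    def forward(self, x):
        h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(self.w1, self.b1)]
        z = [sum(w * hj for w, hj in zip(row, h)) + b for row, b in zip(self.w2, self.b2)]
        m = max(z)  # subtract the max for a numerically stable softmax
        e = [math.exp(zk - m) for zk in z]
        s = sum(e)
        return h, [ek / s for ek in e]

    def train_step(self, x, label, lr=0.1):
        h, p = self.forward(x)
        # Gradient of the cross-entropy loss with respect to the output logits.
        dz = [pk - (1.0 if k == label else 0.0) for k, pk in enumerate(p)]
        # Back-propagate to the hidden layer before the output weights change.
        dh = [(1.0 - h[j] ** 2) * sum(dz[k] * self.w2[k][j] for k in range(len(dz)))
              for j in range(len(h))]
        for k, dzk in enumerate(dz):
            for j, hj in enumerate(h):
                self.w2[k][j] -= lr * dzk * hj
            self.b2[k] -= lr * dzk
        for j, dhj in enumerate(dh):
            for i, xi in enumerate(x):
                self.w1[j][i] -= lr * dhj * xi
            self.b1[j] -= lr * dhj

    def predict(self, x):
        _, p = self.forward(x)
        return max(range(len(p)), key=lambda k: p[k])


def make_toy_data(n_muscles=6, n_movements=6, per_class=10, noise=0.1, seed=1):
    """Hypothetical EMG features: each movement mainly activates one muscle channel."""
    rng = random.Random(seed)
    data = []
    for label in range(n_movements):
        for _ in range(per_class):
            x = [(1.0 if m == label % n_muscles else 0.0) + rng.gauss(0.0, noise)
                 for m in range(n_muscles)]
            data.append((x, label))
    return data


data = make_toy_data()
net = FeedForwardClassifier(n_in=6, n_hidden=8, n_classes=6)
order_rng = random.Random(2)
for _ in range(100):
    order_rng.shuffle(data)
    for x, label in data:
        net.train_step(x, label)
accuracy = sum(net.predict(x) == label for x, label in data) / len(data)
```

With six movement classes, chance level is 1/6 (about 16.7%), which is the reference against which a decoding accuracy above 65% should be read. Real EMG features would of course be far less separable than this toy set.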

Presented on 08.01.2020 by Felix Grün

One of the primary ways we interact with the world is using our hands. In macaques, the circuit spanning the anterior intraparietal area, the hand area of the ventral premotor cortex, and the primary motor cortex is necessary for transforming visual information into grasping movements. We hypothesized that a recurrent neural network mimicking the multi-area structure of the anatomical circuit and using visual features to generate the required muscle dynamics to grasp objects would explain the neural and computational basis of the grasping circuit. Modular networks with object feature input and sparse inter-module connectivity outperformed other models at explaining neural data and the inter-area relationships present in the biological circuit, despite the absence of neural data during network training. Network dynamics were governed by simple rules, and targeted lesioning of modules produced deficits similar to those observed in lesion studies, providing a potential explanation for how grasping movements are generated.
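The idea of a modular recurrent network with sparse inter-module connectivity, and of lesioning a module, can be sketched in a few lines. This is only an illustration under strong assumptions, not the model from the paper: three modules labelled AIP, F5 (the ventral premotor hand area) and M1, dense random recurrence within each module, sparse purely feedforward links AIP to F5 to M1, object features entering only AIP, and a "lesion" implemented by silencing a module's output. Module sizes, connection probability, gain and the feature vector are hypothetical.

```python
import math
import random

MODULES = ("AIP", "F5", "M1")  # visual input enters AIP; M1 is the read-out module


def build_weights(n=10, p_inter=0.1, g=0.8, seed=0):
    """Dense recurrence inside each module, sparse feedforward links AIP->F5->M1."""
    rng = random.Random(seed)
    size = n * len(MODULES)
    w = [[0.0] * size for _ in range(size)]
    for post in range(size):
        for pre in range(size):
            m_post, m_pre = post // n, pre // n
            if m_post == m_pre:
                w[post][pre] = rng.gauss(0.0, g / math.sqrt(n))
            elif m_post == m_pre + 1 and rng.random() < p_inter:
                w[post][pre] = rng.gauss(0.0, 1.0)
    return w


def run(w, features, steps=30, n=10, dt=0.1, lesion=None, seed=1):
    """Leaky rate dynamics driven by a constant object-feature vector; returns M1 rates."""
    rng = random.Random(seed)
    size = len(w)
    # Input weights: only AIP units receive the object features.
    b = [[rng.gauss(0.0, 1.0) if i < n else 0.0 for _ in features] for i in range(size)]
    x = [0.0] * size
    for _ in range(steps):
        r = [math.tanh(v) for v in x]
        if lesion is not None:  # silence the output of every unit in the lesioned module
            start = MODULES.index(lesion) * n
            for i in range(start, start + n):
                r[i] = 0.0
        drive = [sum(wij * rj for wij, rj in zip(w[i], r))
                 + sum(bij * u for bij, u in zip(b[i], features))
                 for i in range(size)]
        x = [v + dt * (-v + d) for v, d in zip(x, drive)]
    return [math.tanh(v) for v in x[-n:]]


w = build_weights()
features = [1.0, 0.5, -0.3]  # hypothetical object features (e.g. size, shape, orientation)
intact = run(w, features)
lesioned = run(w, features, lesion="F5")
```

In the intact network the object features propagate through the sparse links and drive M1; silencing F5 cuts the only route from input to output, so M1 stays at rest. This is a crude analogue of the targeted lesions described above, whose effects in the paper were compared against experimental lesion studies.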

Presented on 18.12.2019 by Marie Schmidt

Error-related potentials are the neural signature of error processing in the brain. These event-related potentials can be measured via electroencephalography (EEG) and are elicited both by one’s own errors and by errors made by others. In our project we are interested in applying such signals in brain-computer interfaces.
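Event-related potentials are typically extracted by cutting the EEG into epochs time-locked to events, subtracting a pre-event baseline, and averaging across trials; for error-related potentials a common summary is the difference wave between error and correct trials. The sketch below does this for a single channel. The function names, the sample-index representation of events and the synthetic signal are assumptions for illustration; real pipelines add filtering, artifact rejection and multiple channels.

```python
def epoch_average(signal, events, pre, post):
    """Average windows [e - pre, e + post) around each event, baseline-corrected on the pre-event part."""
    epochs = []
    for e in events:
        if e - pre < 0 or e + post > len(signal):
            continue  # skip events too close to the edges of the recording
        window = signal[e - pre:e + post]
        baseline = sum(window[:pre]) / pre if pre > 0 else 0.0
        epochs.append([v - baseline for v in window])
    if not epochs:
        raise ValueError("no complete epochs")
    return [sum(ep[i] for ep in epochs) / len(epochs) for i in range(pre + post)]


def difference_wave(signal, error_events, correct_events, pre, post):
    """Error-minus-correct average: the usual way to isolate an error-related potential."""
    err = epoch_average(signal, error_events, pre, post)
    cor = epoch_average(signal, correct_events, pre, post)
    return [a - b for a, b in zip(err, cor)]


# Synthetic single-channel recording: a small deflection follows every error event only.
signal = [0.0] * 200
template = [0.0, 1.0, 2.0, 1.0, 0.0]
error_events, correct_events = [20, 80, 140], [50, 110, 170]
for e in error_events:
    for i, t in enumerate(template):
        signal[e + i] += t
diff = difference_wave(signal, error_events, correct_events, pre=5, post=5)
```

Averaging suppresses activity that is not time-locked to the events, and subtracting the correct-trial average removes components common to both conditions; in this noise-free toy case the post-event half of the difference wave recovers the template exactly.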

Presented on 03.03.2021 by Aline Xavier Fidêncio

Links:

Artificial neural networks are universal function approximators. They can forecast dynamics, but they may need impractically many neurons to do so, especially if the dynamics is chaotic. We use neural networks that incorporate Hamiltonian dynamics to efficiently learn phase space orbits even as nonlinear systems transition from order to chaos. We demonstrate Hamiltonian neural networks on a widely used dynamics benchmark, the Hénon-Heiles potential, and on nonperturbative dynamical billiards. We introspect to elucidate the Hamiltonian neural network forecasting.
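A Hamiltonian neural network learns a single scalar function H(q, p) and obtains the dynamics from Hamilton's equations, dq/dt = ∂H/∂p and dp/dt = −∂H/∂q, which builds energy conservation into the forecast. The sketch below illustrates that structure with two stated substitutions: the known analytic Hénon-Heiles Hamiltonian, H = (p_x² + p_y²)/2 + (x² + y²)/2 + x²y − y³/3, stands in for a trained network, and central finite differences stand in for automatic differentiation. A leapfrog (symplectic) integrator then keeps the energy nearly constant along the orbit. All function names are illustrative.

```python
def henon_heiles(x, y, px, py):
    """H = (px^2 + py^2)/2 + (x^2 + y^2)/2 + x^2*y - y^3/3."""
    return 0.5 * (px ** 2 + py ** 2) + 0.5 * (x ** 2 + y ** 2) + x ** 2 * y - y ** 3 / 3.0


def hamiltonian_vector_field(h, state, eps=1e-6):
    """Return (dx/dt, dy/dt, dpx/dt, dpy/dt) = (dH/dpx, dH/dpy, -dH/dx, -dH/dy).

    An HNN would learn h and differentiate it with autodiff;
    central differences stand in for that here.
    """
    def partial(i):
        up, down = list(state), list(state)
        up[i] += eps
        down[i] -= eps
        return (h(*up) - h(*down)) / (2.0 * eps)

    return partial(2), partial(3), -partial(0), -partial(1)


def leapfrog(h, state, dt, steps):
    """Kick-drift-kick integrator; valid here because H = |p|^2/2 + V(q) is separable."""
    x, y, px, py = state
    for _ in range(steps):
        _, _, fx, fy = hamiltonian_vector_field(h, (x, y, px, py))
        px, py = px + 0.5 * dt * fx, py + 0.5 * dt * fy
        x, y = x + dt * px, y + dt * py  # dq/dt = p for this kinetic energy
        _, _, fx, fy = hamiltonian_vector_field(h, (x, y, px, py))
        px, py = px + 0.5 * dt * fx, py + 0.5 * dt * fy
    return x, y, px, py


start = (0.0, 0.1, 0.3, 0.0)  # energy about 0.05, below the escape energy 1/6
end = leapfrog(henon_heiles, start, dt=0.01, steps=5000)
drift = abs(henon_heiles(*end) - henon_heiles(*start))
field = hamiltonian_vector_field(henon_heiles, (0.1, 0.2, 0.3, 0.4))
```

The energy drift stays small because both the vector field and the integrator respect the Hamiltonian structure; an ordinary network that predicts the four time derivatives directly has no such guarantee, which is the motivation given in the abstract.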

Presented on 01.07.2020 by Ioannis Iossifidis

Links: